Anatomy of a CPU

The CPU is often called the brains of a computer, and just like the human brain, it consists of several parts that work together to process information. There are parts that take in information, parts that store information, parts that process information, parts that help output information, and more. In today's explainer, we'll go over the key elements that make up a CPU and how they all work together to power your computer.

You should know, this article is part of our Anatomy series that dissects all the tech behind PC components. We also have a dedicated series on CPU Design that goes deeper into the CPU design process and how things work internally. It's a highly recommended technical read. This anatomy article will revisit some of the fundamentals from the CPU series, but at a higher level and with additional content.

Compared to previous articles in our Anatomy series, this one will inevitably be more abstract. When you're looking inside something like a power supply, you can clearly see the capacitors, transformers, and other components. That's simply not possible with a modern CPU since everything is so tiny and because Intel and AMD don't publicly disclose their designs. Most CPU designs are proprietary, so the topics covered in this article represent the general features that all CPUs have.

So let's dive in. Every digital system needs some form of a Central Processing Unit. Fundamentally, a programmer writes code to do whatever their task is, and then a CPU executes that code to produce the intended result. The CPU is also connected to other parts of a system like memory and I/O to help keep it fed with the relevant data, but we won't cover those systems today.

The CPU Blueprint: An ISA

When analyzing any CPU, the first thing you'll come across is the Instruction Set Architecture (ISA). This is the figurative blueprint for how the CPU operates and how all the internal systems interact with each other. Just like there are many breeds of dogs within the same species, there are many different types of ISAs a CPU can be built on. The two most common types are x86 (found in desktops and laptops) and ARM (found in embedded and mobile devices).

There are some others like MIPS, RISC-V, and PowerPC that have more niche applications. An ISA will specify what instructions the CPU can process, how it interacts with memory and caches, how work is divided in the many stages of processing, and more.

To cover the main portions of a CPU, we'll follow the path an instruction takes as it is executed. Different types of instructions may follow different paths and use different parts of a CPU, but we'll generalize here to cover the biggest parts. We'll start with the most basic design of a single-core processor and gradually add complexity as we get towards a more modern design.

Control Unit and Datapath

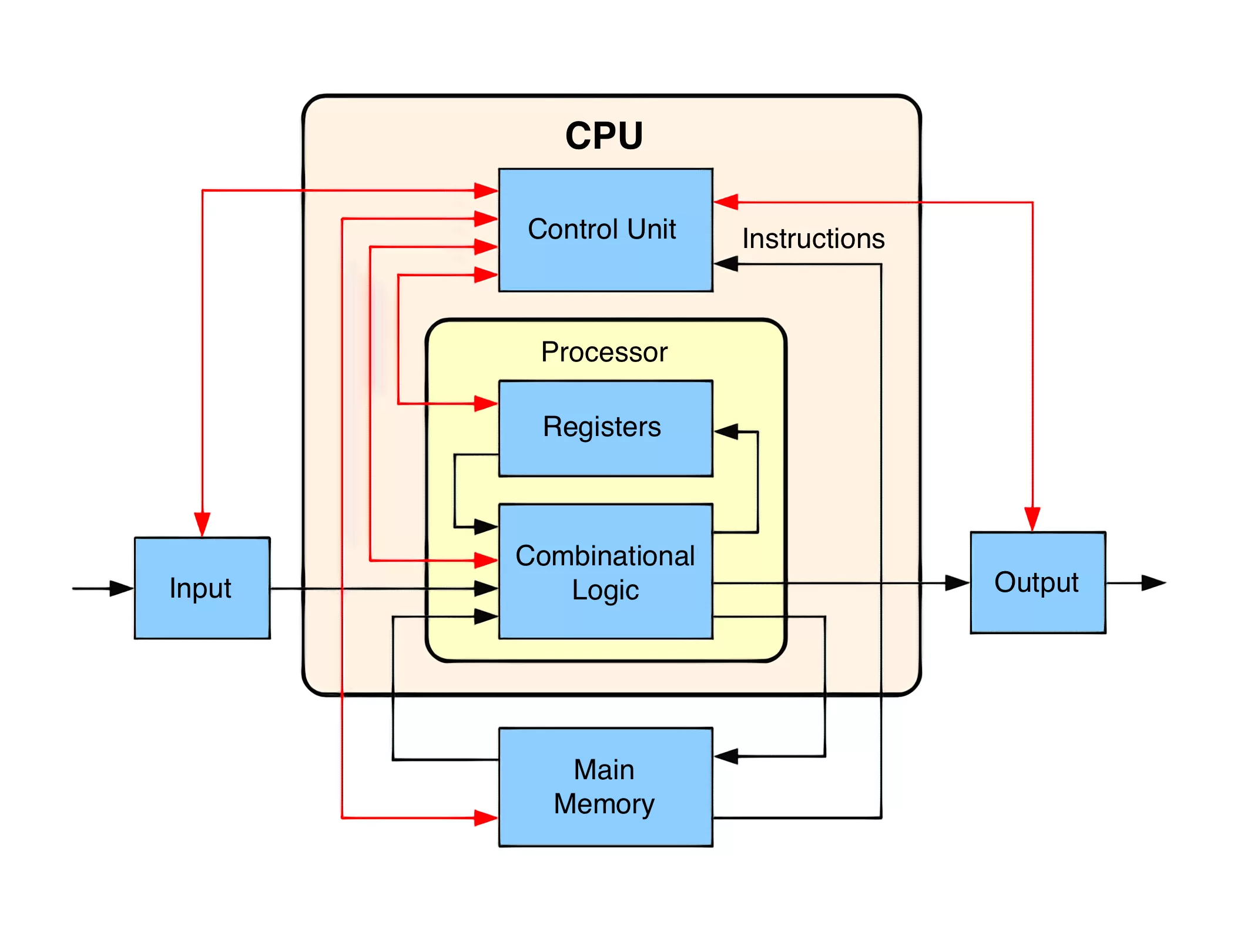

The parts of a CPU can be divided into two: the control unit and the datapath. Imagine a train car. The engine is what moves the train, but the conductor is pulling the levers behind the scenes and controlling the different aspects of the engine. A CPU is the same way.

The datapath is like the engine and, as the name suggests, is the path where the data flows as it is processed. The datapath receives the inputs, processes them, and sends them out to the right place when they are done. The control unit tells the datapath how to operate, like the conductor of the train. Depending on the instruction, the control unit will route signals to different components, turn different parts of the datapath on and off, and monitor the state of the CPU.

The Instruction Cycle - Fetch

The first thing our CPU must do is figure out what instructions to execute next and transfer them from memory into the CPU. Instructions are produced by a compiler and are specific to the CPU's ISA. ISAs will share most common types of instructions like load, store, add, subtract, etc., but there may be additional special types of instructions unique to each particular ISA. The control unit will know what signals need to be routed where for each type of instruction.

When you run a .exe on Windows, for example, the code for that program is moved into memory and the CPU is told what address the first instruction starts at. The CPU always maintains an internal register that holds the memory location of the next instruction to be executed. This is called the Program Counter (PC).

Once it knows where to start, the first step of the instruction cycle is to get that instruction. This moves the instruction from memory into the CPU's instruction register and is known as the Fetch stage. Realistically, the instruction is likely to be in the CPU's cache already, but we'll cover those details in a bit.
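To make the Fetch stage a little more concrete, here is a minimal sketch in Python. It assumes a toy machine where memory is a plain list and instructions are readable strings; the names `memory`, `pc`, and `fetch` are our own illustrations and don't correspond to any real ISA.

```python
# Toy model of the Fetch stage (hypothetical machine, not a real ISA).
memory = ["LOAD A, 1234", "LOAD B, 5678", "ADD C, A, B", "HALT"]

def fetch(memory, pc):
    """Return the instruction at address pc and the updated program counter."""
    instruction_register = memory[pc]    # copy the instruction into the CPU
    return instruction_register, pc + 1  # the PC now points at the next instruction

pc = 0  # address of the first instruction, handed to the CPU at program start
fetched = []
while True:
    instruction, pc = fetch(memory, pc)
    fetched.append(instruction)
    if instruction == "HALT":
        break
```

A real CPU advances the PC by the size of the instruction in bytes rather than by one, and branches can overwrite it entirely, but the basic loop is the same.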

The Instruction Cycle - Decode

When the CPU has an instruction, it needs to figure out specifically what type of instruction it is. This is called the Decode stage. Each instruction will have a certain set of bits called the Opcode that tells the CPU how to interpret it. This is similar to how different file extensions are used to tell a computer how to interpret a file. For instance, .jpg and .png are both image files, but they organize data in a different way so the computer needs to know the type in order to properly interpret them.

Depending on how complex the ISA is, the instruction decode portion of the CPU may become complex. An ISA like RISC-V may only have a few dozen instructions while x86 has thousands. On a typical Intel x86 CPU, the decode process is one of the most challenging and takes up a lot of space. The most common types of instructions that a CPU would decode are memory, arithmetic, or branch instructions.
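As a rough illustration of what decoding means, the sketch below pulls the opcode and operand fields out of an instruction word using shifts and masks. The 16-bit layout and the opcode numbers are invented for this example; real ISAs define their own, usually more intricate, encodings.

```python
# Hypothetical 16-bit format: [opcode:4][dest:4][src1:4][src2:4]
OPCODES = {0x1: "LOAD", 0x2: "STORE", 0x3: "ADD", 0x4: "BRANCH"}

def decode(word):
    """Split an instruction word into its operation name and register fields."""
    opcode = (word >> 12) & 0xF  # top four bits select the operation
    dest = (word >> 8) & 0xF     # destination register number
    src1 = (word >> 4) & 0xF     # first source register number
    src2 = word & 0xF            # second source register number
    return OPCODES[opcode], dest, src1, src2

decoded = decode(0x3C12)  # opcode 0x3, dest 12, sources 1 and 2
```

With a fixed-width format like this, decoding is a handful of wires. Part of what makes x86 decode so expensive is that its instructions vary in length, so the CPU can't even tell where the next one starts until it has partly decoded the current one.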

3 Main Instruction Types

A memory instruction may be something like "read the value from memory address 1234 into value A" or "write value B to memory address 5678". An arithmetic instruction might be something like "add value A to value B and store the result into value C". A branch instruction might be something like "execute this code if value C is positive or execute that code if value C is negative". A typical program may chain these together to come up with something like "add the value at memory address 1234 to the value at memory address 5678 and store it in memory address 4321 if the result is positive or at address 8765 if the result is negative".

Before we start executing the instruction we just decoded, we need to pause for a moment to talk about registers.

A CPU has a few very small but very fast pieces of memory called registers. On a 64-bit CPU these would hold 64 bits each and there may be only a few dozen for the core. These are used to store values that are currently being used and can be considered something like an L0 cache. In the instruction examples above, values A, B, and C would all be stored in registers.
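The chained example above can be written out step by step. This is only a sketch: memory is modeled as a dictionary, the starting values 7 and -10 are made up, and treating a result of zero as "positive" is our own assumption since the example doesn't say.

```python
# Memory modeled as address -> value; registers as a small named set.
memory = {1234: 7, 5678: -10}  # sample values chosen for illustration
registers = {"A": 0, "B": 0, "C": 0}

# Memory instructions: load both operands into registers.
registers["A"] = memory[1234]
registers["B"] = memory[5678]

# Arithmetic instruction: C = A + B.
registers["C"] = registers["A"] + registers["B"]

# Branch instruction: pick which store to run based on the sign of C.
if registers["C"] >= 0:
    memory[4321] = registers["C"]  # path for a non-negative result
else:
    memory[8765] = registers["C"]  # path for a negative result
```

With these inputs the sum is -3, so the value lands at address 8765 and address 4321 is never written.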

The ALU

Back to the execution stage now. This will be different for the 3 types of instructions we talked about above, so we'll cover each one separately.

We'll start with arithmetic instructions since they are the easiest to understand. These types of instructions are fed into an Arithmetic Logic Unit (ALU) for processing. An ALU is a circuit that typically takes two inputs with a control signal and outputs a result.

Imagine a basic calculator you used in middle school. To perform an operation, you type in the two input numbers as well as what type of operation you want to perform. The calculator does the computation and outputs the result. In the case of our CPU's ALU, the type of operation is determined by the instruction's opcode and the control unit would send that to the ALU. In addition to basic arithmetic, ALUs can also perform bitwise operations like AND, OR, NOT, and XOR. The ALU will also output some status info for the control unit about the calculation it has just completed. This could include things like whether the result was positive, negative, zero, or had an overflow.
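Here is a small sketch of that idea: a function that takes an operation name and two inputs and returns a result plus status flags. We assume an 8-bit ALU to keep the numbers small, and we report a carry flag (unsigned overflow out of the top bit) as a simplified stand-in for overflow detection. The operation names are illustrative.

```python
MASK = 0xFF  # assume an 8-bit ALU so results wrap at 256

def alu(op, a, b=0):
    """Return (result, flags) for one operation on 8-bit inputs."""
    if op == "ADD":
        raw = a + b
    elif op == "SUB":
        raw = a - b
    elif op == "AND":
        raw = a & b
    elif op == "OR":
        raw = a | b
    elif op == "XOR":
        raw = a ^ b
    elif op == "NOT":
        raw = ~a
    else:
        raise ValueError(f"unknown operation: {op}")
    result = raw & MASK  # keep only the low 8 bits
    flags = {
        "zero": result == 0,
        "negative": bool(result & 0x80),      # top bit set in two's complement
        "carry": op == "ADD" and raw > MASK,  # the sum did not fit in 8 bits
    }
    return result, flags

wrapped, wrapped_flags = alu("ADD", 200, 100)
```

Adding 200 and 100 doesn't fit in 8 bits, so the result wraps around to 44 and the carry flag is set. The control unit can use flags like these to decide, for example, whether a later branch should be taken.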

An ALU is most associated with arithmetic operations, but it may also be used for memory or branch instructions. For example, the CPU may need to calculate a memory address given as the result of a previous arithmetic operation. It may also need to calculate the offset to add to the program counter that a branch instruction requires. Something like "if the previous result was negative, jump ahead 20 instructions."

Memory Instructions and Hierarchy

For memory instructions, we'll need to understand a concept called the Memory Hierarchy. This represents the relationship between caches, RAM, and main storage. When a CPU receives a memory instruction for a piece of data that it doesn't yet have locally in its registers, it will go down the memory hierarchy until it finds it. Most modern CPUs contain three levels of cache: L1, L2, and L3. The first place the CPU will check is the L1 cache. This is the smallest and fastest of the three levels of cache. The L1 cache is typically split into a portion for data and a portion for instructions. Remember, instructions need to be fetched from memory just like data.

A typical L1 cache may be a few hundred KB. If the CPU can't find what it's looking for in the L1 cache, it will check the L2 cache. This may be on the order of a few MB. The next step is the L3 cache which may be a few tens of MB. If the CPU can't find the data it needs in the L3 cache, it will go to RAM and finally main storage. As we go down each step, the available space increases by roughly an order of magnitude, but so does the latency.

Once the CPU finds the data, it will bring it up the hierarchy so that the CPU has fast access to it if needed in the future. There are a lot of steps here, but it ensures that the CPU has fast access to the data it needs. For example, the CPU can read from its internal registers in just a cycle or two, L1 in a handful of cycles, L2 in ten or so cycles, and the L3 in a few dozen. If it needs to go to RAM, that could take hundreds of cycles, and main storage could take tens of thousands or even millions of cycles. Depending on the system, each core will likely have its own private L1 cache, share an L2 with one other core, and share an L3 among groups of four or more cores. We'll talk more about multi-core CPUs later in this article.
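The walk down the hierarchy can be sketched as a loop over cache levels. The latencies below are illustrative round numbers in the ranges mentioned above, the RAM figure is our own assumption, and adding up the latency of every level checked is a simplification of how real hardware overlaps these lookups.

```python
# Illustrative latencies in cycles; the RAM figure is an assumption.
LEVELS = [("L1", 4), ("L2", 12), ("L3", 40), ("RAM", 200)]

def access(address, caches):
    """Walk down the hierarchy; return (level where found, total cycles spent)."""
    cycles = 0
    for name, latency in LEVELS:
        cycles += latency
        # RAM is the last stop in this sketch and is assumed to hold everything.
        if name == "RAM" or address in caches[name]:
            # Bring the data up the hierarchy so the next access is fast.
            for level in caches:
                caches[level].add(address)
            return name, cycles

caches = {"L1": set(), "L2": set(), "L3": {0x1000}}
first = access(0x1000, caches)   # misses L1 and L2, hits L3
second = access(0x1000, caches)  # now served straight from L1
```

The first access costs 56 cycles in this model and the second only 4, which is the whole point of copying data upward once it has been found.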

Branch and Jump Instructions

The last of the three major instruction types is the branch instruction. Modern programs jump around all the time and a CPU will rarely ever execute more than a dozen contiguous instructions without a branch. Branch instructions come from programming elements like if-statements, for-loops, and return-statements. These are all used to interrupt the program execution and switch to a different part of the code. There are also jump instructions which are branch instructions that are always taken.

Conditional branches are especially tricky for a CPU since it may be executing multiple instructions at once and may not determine the outcome of a branch until after it has started on subsequent instructions.

In order to fully understand why this is an issue, we'll need to take another diversion and talk about pipelining. Each step in the instruction cycle may take a few cycles to complete. That means that while an instruction is being fetched, the ALU would otherwise be sitting idle. To maximize a CPU's efficiency, we divide each stage in a process called pipelining.

The classic way to understand this is through an analogy to doing laundry. You have two loads to do and washing and drying each take an hour. You could put the first load in the washer then the dryer when it's done, and then start the second load. This would take four hours. However, if you divided the work and started the second load washing while the first load was drying, you could get both loads done in three hours. The one hour reduction scales with the number of loads you have and the number of washers and dryers. It still takes two hours to do an individual load, but the overlap increases the total throughput from 0.5 loads/hr to about 0.67 loads/hr.
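The laundry arithmetic is easy to check with a few lines of code. This sketch assumes exactly one washer and one dryer, each taking one hour per load; the function name is our own.

```python
def laundry_hours(loads, pipelined):
    """Total hours for `loads` loads with a 1-hour wash and a 1-hour dry."""
    if loads == 0:
        return 0
    if pipelined:
        return loads + 1  # washes run back to back, plus one hour for the last dry
    return 2 * loads      # each load finishes completely before the next starts

sequential = laundry_hours(2, pipelined=False)
overlapped = laundry_hours(2, pipelined=True)
throughput_sequential = 2 / sequential  # loads per hour without overlap
throughput_overlapped = 2 / overlapped  # loads per hour with overlap
```

For two loads, that is 4 hours versus 3 hours, or 0.5 loads per hour versus 2/3 (roughly 0.67) loads per hour. As the number of loads grows, the overlapped throughput approaches one load per hour, even though each individual load still takes two hours from start to finish.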

CPUs use this same method to improve instruction throughput. A modern ARM or x86 CPU may have 20+ pipeline stages which means at any given point, that core is processing 20+ different instructions at once. Each design is unique, but one sample division may be 4 cycles for fetch, 6 cycles for decode, 3 cycles for execute, and 7 cycles for updating the results back to memory.

Back to branches, hopefully you can start to see the issue. If we don't know that an instruction is a branch until cycle 10, we will have already started executing 9 new instructions that may be invalid if the branch is taken. To get around this issue, CPUs have very complex structures called branch predictors. They use similar concepts from machine learning to try and guess if a branch will be taken or not. The intricacies of branch predictors are well beyond the scope of this article, but on a basic level, they track the status of previous branches to learn whether or not an upcoming branch is likely to be taken. Modern branch predictors can have 95% accuracy or higher.
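To give a feel for "tracking the status of previous branches", here is a sketch of a two-bit saturating counter, a classic textbook scheme. It is far simpler than what modern CPUs actually ship, and we are not claiming any particular processor works this way; the class name and the test pattern are our own.

```python
class TwoBitPredictor:
    """One 2-bit counter per branch address: 0-1 predicts not taken, 2-3 taken."""

    def __init__(self):
        self.counters = {}  # branch address -> counter value in the range 0..3

    def predict(self, branch_address):
        # Unseen branches start at 1 (weakly not taken).
        return self.counters.get(branch_address, 1) >= 2

    def update(self, branch_address, taken):
        counter = self.counters.get(branch_address, 1)
        # Move toward 3 when taken and toward 0 when not, saturating at the ends.
        counter = min(counter + 1, 3) if taken else max(counter - 1, 0)
        self.counters[branch_address] = counter

# A loop branch: taken 9 times, then not taken once at loop exit, repeated 10 times.
outcomes = ([True] * 9 + [False]) * 10
predictor = TwoBitPredictor()
correct = 0
for taken in outcomes:
    if predictor.predict(0x400) == taken:
        correct += 1
    predictor.update(0x400, taken)
accuracy = correct / len(outcomes)
```

On this pattern the predictor gets 89 of 100 branches right. It misses the very first branch while warming up, and then only the loop exit each time around, because a single not-taken result isn't enough to flip its prediction.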

Once the result of the branch is known for sure (it has finished that stage of the pipeline), the program counter will be updated and the CPU will go on to execute the next instruction. If the branch was mispredicted, the CPU will throw out all the instructions after the branch that it mistakenly started to execute and start up again from the correct place.

Out-Of-Order Execution

Now that we know how to execute the three most common types of instructions, let's take a look at some of the more advanced features of a CPU. Virtually all modern processors don't actually execute instructions in the order in which they are received. A paradigm called out-of-order execution is used to minimize downtime while waiting for other instructions to finish.

If a CPU knows that an upcoming instruction requires data that won't be ready in time, it can switch the instruction order and bring in an independent instruction from later in the program while it waits. This instruction reordering is an extremely powerful tool, but it is far from the only trick CPUs use.
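The reordering idea can be sketched as a tiny scheduler that, each cycle, issues the oldest waiting instruction whose inputs are ready. Everything here is a simplification we made up for illustration: the three-instruction program, the latencies, and the limit of one issue per cycle.

```python
# Each instruction: (name, destination register, source registers, latency in cycles)
program = [
    ("load r1", "r1", [], 3),                     # slow memory access
    ("add r2 = r1 + r1", "r2", ["r1"], 1),        # depends on the load
    ("add r3 = r4 + r5", "r3", ["r4", "r5"], 1),  # independent of the load
]

def schedule(program, initially_ready):
    """Return instruction names in the order they are issued."""
    ready_at = {reg: 0 for reg in initially_ready}  # cycle each register becomes valid
    waiting = list(program)
    order = []
    cycle = 0
    while waiting:
        for instr in waiting:
            name, dest, sources, latency = instr
            # An instruction may issue only when all of its sources are valid.
            if all(ready_at.get(src, float("inf")) <= cycle for src in sources):
                order.append(name)
                ready_at[dest] = cycle + latency
                waiting.remove(instr)
                break  # issue at most one instruction per cycle
        cycle += 1
    return order

issue_order = schedule(program, initially_ready=["r4", "r5"])
```

The independent add slips in ahead of the dependent one, so the core does useful work during the cycles it would otherwise spend waiting on the load. Note that this sketch would loop forever if an instruction named a source that nothing ever produces; real hardware tracks dependencies with dedicated structures.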

Another performance-improving feature is called prefetching. If you were to time how long it takes for a random instruction to complete from start to finish, you'd find that the memory access takes up most of the time. A prefetcher is a unit in the CPU that tries to look ahead at future instructions and what data they will require. If it sees one coming that requires data that the CPU doesn't have cached, it will reach out to the RAM and fetch that data into the cache. Hence the name pre-fetch.
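One simple way to approximate this is a stride prefetcher, which guesses the next address from the gap between the last two accesses. That is a different and much cruder technique than looking ahead at upcoming instructions, so treat the sketch below as an illustration of the payoff rather than of how any real prefetcher is built. The cache here is unlimited in size and the access pattern is made up.

```python
def run(addresses, prefetch):
    """Count cache hits for a sequence of accesses, with or without prefetching."""
    cache = set()
    hits = 0
    last = None
    for address in addresses:
        if address in cache:
            hits += 1
        cache.add(address)
        if prefetch and last is not None:
            stride = address - last
            cache.add(address + stride)  # guess the next access and fetch it early
        last = address
    return hits

stream = list(range(0, 64 * 10, 64))  # ten accesses, 64 bytes apart
hits_without = run(stream, prefetch=False)
hits_with = run(stream, prefetch=True)
```

Without prefetching, every access in this stream is a miss. With it, only the first two miss while the stride is being learned, and the remaining eight are already in the cache by the time they are needed.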

Accelerators and the Future

Another major feature starting to be included in CPUs is task-specific accelerators. These are circuits whose entire job is to perform one small task as fast as possible. This might include encryption, media encoding, or machine learning.

The CPU can do these things on its own, but it is vastly more efficient to have a unit dedicated to them. A great example of this is onboard graphics compared to a dedicated GPU. Surely the CPU can perform the computations needed for graphics processing, but having a dedicated unit for them offers orders of magnitude better performance. With the rise of accelerators, the actual core of a CPU may only take up a small fraction of the chip.

The picture below shows an Intel CPU from several years back. Most of the space is taken up by cores and cache. The second picture below it is for a much newer AMD chip. Most of the space there is taken up by components other than the cores.

Going Multicore

The last major feature to cover is how we can connect a bunch of individual CPUs together to form a multicore CPU. It's not as simple as just putting multiple copies of the single core design we talked about earlier. Just like there's no easy way to turn a single-threaded program into a multi-threaded program, the same concept applies to hardware. The issues come from dependence between the cores.

For, say, a 4-core design, the CPU needs to be able to issue instructions 4 times as fast. It also needs four separate interfaces to memory. With multiple entities operating on potentially the same pieces of data, issues like coherence and consistency must be resolved. If two cores were both processing instructions that used the same data, how do they know who has the right value? What if one core modified the data but it didn't reach the other core in time for it to execute? Since they have separate caches that may store overlapping data, complex algorithms and controllers must be used to remove these conflicts.
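The stale-data problem is easy to reproduce in a toy model. The sketch below gives two cores private caches over a shared memory and shows what happens with and without invalidating the other core's copy on a write. The `Core` class, the write-through policy, and the invalidation step are simplifications we chose for illustration; real coherence protocols are considerably more involved.

```python
class Core:
    def __init__(self, name):
        self.name = name
        self.cache = {}  # private cache: address -> value

def read(core, address, memory):
    if address not in core.cache:
        core.cache[address] = memory[address]  # miss: fill from shared memory
    return core.cache[address]

def write(core, address, value, memory, all_cores, invalidate):
    core.cache[address] = value
    memory[address] = value  # write-through, to keep the sketch short
    if invalidate:
        for other in all_cores:
            if other is not core:
                other.cache.pop(address, None)  # drop stale copies elsewhere

def demo(invalidate):
    memory = {0x10: 1}
    a, b = Core("A"), Core("B")
    read(b, 0x10, memory)                          # core B caches the old value
    write(a, 0x10, 2, memory, [a, b], invalidate)  # core A updates it
    return read(b, 0x10, memory)                   # what does core B see now?

stale = demo(invalidate=False)
fresh = demo(invalidate=True)
```

Without invalidation, core B keeps reading the old value 1 from its own cache even though memory now holds 2. With invalidation, B's copy is dropped, its next read misses, and it picks up the new value.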

Proper branch prediction is also extremely important as the number of cores in a CPU increases. The more cores are executing instructions at once, the higher the likelihood that one of them is processing a branch instruction. This means the instruction flow may change at any time.

Typically, separate cores will process instruction streams from different threads. This helps reduce the dependence between cores. That's why if you check Task Manager, you'll often see one core working hard and the others hardly working. Many programs aren't designed for multithreading. There may also be certain cases where it's more efficient to have one core do the work rather than pay the overhead penalties of trying to divide up the work.

Physical Design

Most of this article has focused on the architectural design of a CPU since that's where most of the complexity is. However, this all needs to be created in the real world and that adds another level of complexity.

In order to synchronize all the components throughout the processor, a clock signal is used. Modern processors typically run between 3.0GHz and 5.0GHz and that hasn't seemed to change in the past decade. At each of these cycles, the billions of transistors inside a chip are switching on and off.

Clocks are critical to ensure that as each stage of the pipeline advances, all the values show up at the right time. The clock determines how many instructions a CPU can process per second. Increasing its frequency through overclocking will make the chip faster, but will also increase power consumption and heat output.
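As a back-of-the-envelope sketch, instruction throughput is roughly the clock frequency multiplied by the average number of instructions completed per cycle. The figure of 2 instructions per cycle below is purely an assumption for illustration; the real number varies widely by chip and workload.

```python
def instructions_per_second(clock_hz, instructions_per_cycle):
    """Rough throughput estimate: clock cycles per second times work per cycle."""
    return clock_hz * instructions_per_cycle

base = instructions_per_second(4.0e9, 2)         # 4.0 GHz, assumed 2 per cycle
overclocked = instructions_per_second(4.4e9, 2)  # same chip pushed to 4.4 GHz
speedup = overclocked / base
```

In this idealized model a 10% overclock gives a 10% speedup. In practice the gain is usually smaller, since memory doesn't get faster along with the core.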

Heat is a CPU's worst enemy. As digital electronics heat up, the microscopic transistors can start to degrade. This can lead to damage in a chip if the heat is not removed. This is why all CPUs come with heat spreaders. The actual silicon die of a CPU may only take up 20% of the surface area of a physical device. Increasing the footprint allows the heat to be spread more evenly to a heatsink. It also allows more pins for interfacing with external components.

Modern CPUs can have a thousand or more input and output pins on the back. A mobile chip may only have a few hundred pins though since most of the computing parts are within the chip. Regardless of the design, around half of them are devoted to power delivery and the rest are used for data communications. This includes communication with the RAM, chipset, storage, PCIe devices, and more. With high performance CPUs drawing a hundred or more amps at full load, they need hundreds of pins to spread out the current draw evenly. The pins are usually gold plated to improve electrical conductivity. Different manufacturers use different arrangements of pins throughout their many product lines.

Putting It All Together with an Example

To wrap things up, we'll take a quick look at the design of an Intel Core 2 CPU. This is from way back in 2006, so some parts may be outdated, but details on newer designs are not available.

Starting at the top, we have the instruction cache and ITLB. The Translation Lookaside Buffer (TLB) is used to help the CPU know where in memory to go to find the instruction it needs. Those instructions are stored in an L1 instruction cache and are then sent into a pre-decoder. The x86 architecture is extremely complex and dense so there are many steps to decoding. Meanwhile, the branch predictor and prefetcher are both looking ahead for any potential issues caused by incoming instructions.

From there, the instructions are sent into an instruction queue. Think back to how the out-of-order design allows a CPU to execute instructions and choose the most timely one to execute. This queue holds the current instructions a CPU is considering. Once the CPU knows which instruction would be the best to execute, it is further decoded into micro-operations. While an instruction might contain a complex task for the CPU, micro-ops are granular tasks that are more easily interpreted by the CPU.

These instructions then go into the Register Alias Table, the Reorder Buffer (ROB), and the Reservation Station. The exact function of these three components is a bit complex (think graduate level university course), but they are used in the out-of-order process to help manage dependencies between instructions.

A single "core" will actually have many ALUs and memory ports. Incoming operations are put into the reservation station until an ALU or memory port is available for use. Once the required component is available, the instruction will be processed with the help from the L1 data cache. The output results will be stored and the CPU is now ready to start on the next instruction. That's about it!

While this article was not meant to be a definitive guide to exactly how every CPU works, it should give you a good idea of their inner workings and complexities. Frankly, no one outside of AMD and Intel actually knows how their CPUs work. Each section of this article represents an entire field of research and development so the information presented here just scratches the surface.

Keep Reading

If you are interested in learning more about how the various components covered in this article are designed, check out Part 2 of our CPU design series. If you're more interested in learning how a CPU is physically made down to the transistor and silicon level, check out Part 3.

Source: https://www.techspot.com/article/2000-anatomy-cpu/

Posted by: johnsonfately.blogspot.com
